Last updated 05/16/2011

The main goal of this project is to develop a simple yet resourceful application for the Apple iPad that allows remote configuration of surgical robot components. When fully implemented, the application will serve as a mobile, centralized, ergonomically designed console with which the surgical team can dynamically change the robot's settings. The target system for testing this device is the EyeRobot.

Student Members: Hanlin Wan, Jonathan Satria

Mentors: Balazs Vagvolgyi

Other Collaborators: Russell Taylor

An operating room that uses a robotic surgical assistant often requires several computer workstations, each controlling different devices or aspects of the robot-human interface. This decentralized approach places both ergonomic and efficiency constraints on the surgeon and his or her team. In practice, team members must walk to the correct component workstation, which is often placed several meters away from the machine, if not in another room, and enter the correct settings. A central command module could dramatically improve on this. The current input method of keyboard and mouse also has drawbacks: besides being difficult to sterilize and clean, it is poorly suited to the fast-paced operating room, especially now that touchscreens have become much more widely available.

Through the iPad, our application will provide an easy-to-use interface with which to control several component modules. As a portable device, it will also give several members of the surgical team easy access to the robot components.

Our specific aims are:

This project attempts to use all of these tools to build a functioning iPad Mobile Surgical Console. The iPad will initially connect to the Scenario Manager in order to find all the components. Then, these components will be controlled from the iPad through the ICE interface. But first, the challenges involved in compiling the cisst library in the iOS environment will need to be resolved.
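The discover-then-control flow described above can be illustrated with a minimal sketch. It is not the project's code: it does not use the real cisst or ICE APIs, and every class, method, and setting name in it (`ScenarioManager`, `Console`, `exposure`, `gain`) is a hypothetical stand-in chosen only to show the order of operations, with in-memory objects in place of network calls.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """Hypothetical stand-in for one robot component and its settings."""
    name: str
    settings: dict = field(default_factory=dict)

    def set_setting(self, key, value):
        # In the real system this call would travel over the ICE interface;
        # here it only updates an in-memory dictionary.
        if key not in self.settings:
            raise KeyError(f"{self.name} has no setting {key!r}")
        self.settings[key] = value


class ScenarioManager:
    """Hypothetical registry that knows which components are available."""

    def __init__(self, components):
        self._components = {c.name: c for c in components}

    def list_components(self):
        return sorted(self._components)

    def get_component(self, name):
        return self._components[name]


class Console:
    """Hypothetical console: discover components first, then control them."""

    def __init__(self, manager):
        self.manager = manager
        self.components = {}

    def discover(self):
        # Step 1: ask the manager for every component it knows about.
        for name in self.manager.list_components():
            self.components[name] = self.manager.get_component(name)
        return list(self.components)

    def change_setting(self, component, key, value):
        # Step 2: control a previously discovered component.
        self.components[component].set_setting(key, value)


# Example values are made up for illustration.
manager = ScenarioManager([
    Component("camera", {"exposure": 10}),
    Component("robot", {"gain": 1.0}),
])
console = Console(manager)
found = console.discover()
console.change_setting("camera", "exposure", 20)
```

Keeping discovery separate from control means the console does not need to know in advance which components exist, which matches the role the text gives to the Scenario Manager.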

We had a successful test with the EyeRobot in a bunny experiment on April 21, 2011. The iPad interface worked perfectly. The users found our device to be much easier to use and a huge time saver. Furthermore, they gave us ideas to improve the GUI, which we are currently working on. Here is a video of it in use.

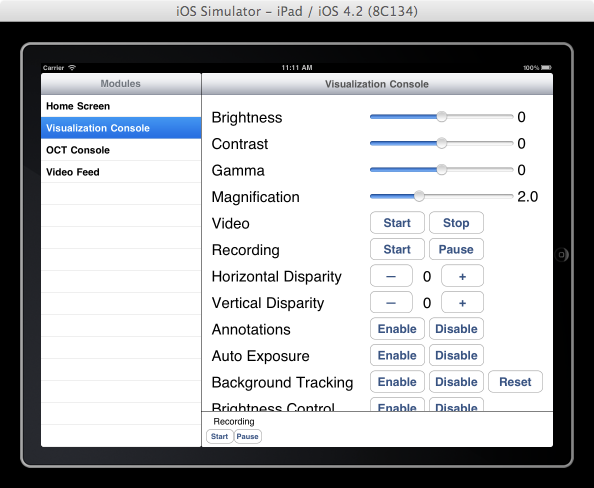

Below is a screenshot of the visualization console where the user can set various settings for the camera.

Click here to see full documentation and tutorials on how to write programs for iOS using the cisst libraries. This page is currently private and under construction.

ICE Source Code - for compiling ICE and cisst for the iOS environment

Xcode Project Source Code - for the iPad application
